Rift: The Architecture of a Zero-Config Dev Runner

A deep dive into every decision, trade-off, and data flow behind a CLI tool that wants to kill your multi-terminal workflow.

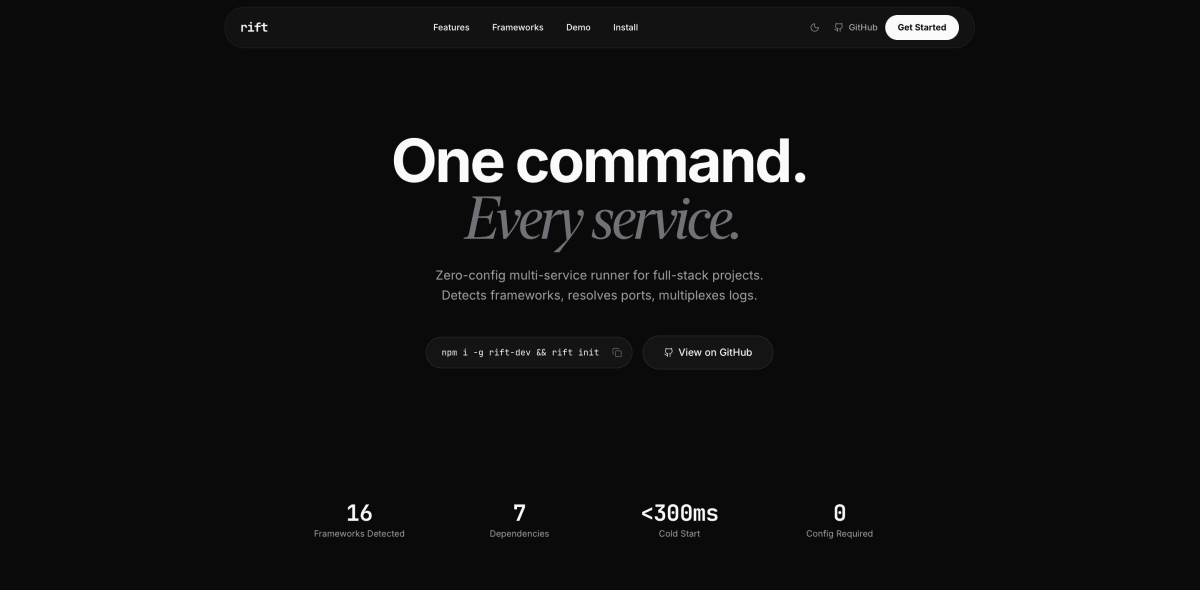

1. The Problem Nobody Solved Correctly

Developers have been running multiple services simultaneously for as long as microservices — and even modest full-stack apps — have existed. The tools are abundant: concurrently pulls roughly 13 million weekly downloads on npm. npm-run-all, Foreman, Overmind, Docker Compose — the list goes on. They all work. They all require you to sit down and write the config file first.

That's the gap Rift targets. Not "how do I run services in parallel?" but "why do I have to tell the tool what my project already knows?"

Every project already contains the information needed to run it: a package.json with next in its dependencies tells you it's a Next.js app that runs on port 3000 via npm run dev. A manage.py next to a requirements.txt with django tells you it's a Django project running python manage.py runserver on port 8000. The frameworks advertise themselves through file markers and dependency declarations. The human just has to read them. Rift automates the reading.

The core thesis is therefore not "a better process runner" but "a process runner that configures itself." Every product decision in Rift flows from this thesis. If a feature requires the user to manually specify something that could be detected, the design document calls it a design failure — literally.

2. The User Journey: What "Zero-Config" Actually Means

The intended experience is three commands across a project's lifetime:

npx rift init # scan, detect, generate rift.yml

npx rift run # start everything, one terminal

npx rift stop # kill everything

With rift status for checking what's alive, and rift fix for when things crash.

The magic moment — and this is explicitly called out as the single most important interaction for adoption — is npx rift init. A developer runs it in their monorepo root. Rift scans directories up to three levels deep, identifies frameworks by file markers and dependency checks, resolves port conflicts between services that share the same default port, propagates port changes into .env files so cross-service URLs stay correct, writes a rift.yml, and prints a summary. All of this under one second without AI, under five seconds with it.

If zero services are detected, Rift refuses to write an empty config file. Instead, it prints an error explaining what it looks for (package.json, requirements.txt, go.mod, Cargo.toml, Gemfile) and hints that setting an ANTHROPIC_API_KEY enables AI detection for non-standard setups. An empty rift.yml would be a broken experience — the user would have to write the config manually, which is exactly the problem Rift exists to solve.

3. The Detection System: Two Strategies, One Fallback Chain

Detection is the core value proposition. It gets the most code, the most tests, and the most architectural attention.

3.1 Rule-Based Detection

The floor. The minimum viable detection. Hardcoded in detect/rules.ts, it covers 15 frameworks across four ecosystems:

JavaScript/TypeScript: Next.js, React (CRA/Vite), Vue, Nuxt, Svelte/SvelteKit, Angular, Express, Fastify, NestJS.

Python: Django, Flask, FastAPI.

Ruby: Rails.

Systems: Go (generic), Rust (generic).

Each framework is identified by a combination of file markers and dependency checks. Next.js, for example, requires either next.config.* as a file marker or next in the dependencies of package.json. Express has no config file at all — it's detected purely from the dependency list. Rails needs both a Gemfile and config/routes.rb.

The important design decision here is that detection never relies on directory name alone. A folder called backend tells you nothing. A folder containing manage.py and a requirements.txt that lists django tells you everything.
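To make the marker-plus-dependency idea concrete, here is a minimal sketch of how such rules could be expressed as data. The Rule and DirSnapshot shapes, the framework identifiers, and the function names are all assumptions for illustration; the article does not show the actual contents of detect/rules.ts.

```typescript
// Hypothetical shapes: the real types in detect/rules.ts are not shown in the article.
interface DirSnapshot {
  files: string[];        // paths relative to the candidate directory
  dependencies: string[]; // names read from package.json, requirements.txt, etc.
}

interface Rule {
  framework: string;
  allFiles?: string[];      // every listed file must exist
  anyFile?: RegExp[];       // at least one file marker matches...
  anyDependency?: string[]; // ...or at least one dependency is declared
}

const RULES: Rule[] = [
  { framework: "nextjs", anyFile: [/^next\.config\.[a-z]+$/], anyDependency: ["next"] },
  { framework: "express", anyDependency: ["express"] },
  { framework: "rails", allFiles: ["Gemfile", "config/routes.rb"] },
  { framework: "django", allFiles: ["manage.py"], anyDependency: ["django"] },
];

function matches(rule: Rule, dir: DirSnapshot): boolean {
  const hasAll = (rule.allFiles ?? []).every((f) => dir.files.includes(f));
  if (!hasAll) return false;
  const needsAny = rule.anyFile !== undefined || rule.anyDependency !== undefined;
  if (!needsAny) return true;
  const fileHit = (rule.anyFile ?? []).some((re) => dir.files.some((f) => re.test(f)));
  const depHit = (rule.anyDependency ?? []).some((d) => dir.dependencies.includes(d));
  return fileHit || depHit;
}

// Note that the directory name never enters the decision.
function detectFramework(dir: DirSnapshot): string | null {
  return RULES.find((rule) => matches(rule, dir))?.framework ?? null;
}
```

A directory containing only a Gemfile returns null here, because the Rails rule needs config/routes.rb as well.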

3.2 AI Detection

The ceiling. When an ANTHROPIC_API_KEY is available, Rift sends the project's file tree (filtered — no node_modules, .git, etc.) and key config file contents to Claude Haiku via the Anthropic API's tool_use feature. The model returns structured JSON — not free text that needs parsing, but a tool call response that maps directly to the service schema.

The decision to use tool_use over free-text parsing is a meaningful trade-off:

| Approach | Pro | Con |
|---|---|---|
| Free-text + regex parsing | Simpler prompt, cheaper tokens | Fragile, breaks on format drift |
| tool_use (structured output) | Guaranteed schema compliance | Slightly more complex prompt setup |

Rift chose structured output because the data has a clear schema (name, path, framework, run command, port) and parsing failures in a zero-config tool would undermine trust in the entire product.

3.3 The Fallback Hierarchy

AI detection available?

├── Yes → Try AI detection

│ ├── Success → Use AI results

│ └── Failure → Fall back to rule-based (silently)

└── No → Use rule-based detection directly

This is the single most important architectural principle in the AI integration: AI enhances, it never gates. Every code path that touches the Anthropic API has a non-AI fallback. If the API is down, if the key is invalid, if the model returns garbage — the tool still works. It just works slightly less well.

The API key resolution order reinforces this:

1. --api-key flag (explicit)

2. ANTHROPIC_API_KEY environment variable (standard)

3. ~/.rift/config.json (persisted)

4. Skip AI entirely and use rules

No step in this chain is mandatory.
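The resolution chain can be sketched as a single function that returns null when no key is found, which is the signal to use rules. The file tree later in the article lists a resolveApiKey helper in utils.ts, but its signature is not shown; the parameters below and the apiKey field name inside config.json are assumptions.

```typescript
import { readFileSync } from "node:fs";
import { homedir } from "node:os";
import { join } from "node:path";

// Returns the first key found, or null meaning "skip AI, use rules".
// Assumption: the persisted config stores the key under an `apiKey` field.
function resolveApiKey(
  flagValue: string | undefined,
  env: Record<string, string | undefined> = process.env,
  configPath: string = join(homedir(), ".rift", "config.json"),
): string | null {
  if (flagValue) return flagValue; // 1. --api-key flag
  if (env.ANTHROPIC_API_KEY) return env.ANTHROPIC_API_KEY; // 2. environment variable
  try {
    const parsed: unknown = JSON.parse(readFileSync(configPath, "utf8"));
    if (typeof parsed === "object" && parsed !== null) {
      const key = (parsed as Record<string, unknown>).apiKey;
      if (typeof key === "string" && key.length > 0) return key; // 3. persisted config
    }
  } catch {
    // A missing or unreadable config file is not an error: fall through.
  }
  return null; // 4. no key, rule-based detection only
}
```

Swallowing the read error is deliberate in this sketch: a broken optional config should degrade to rule-based detection, not abort the run.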

3.4 The Lazy-Loading Decision

The Anthropic SDK adds roughly 200ms to import time. For a tool whose cold-start budget for reaching the first service spawn is 300ms, that's catastrophic. The solution is straightforward: the SDK is never imported at the top level. Both detect/ai.ts and fix/ai.ts use dynamic import() to load it only when AI is actually needed.

This is a classic trade-off between developer ergonomics and user experience. Top-level imports are cleaner code. Lazy imports mean the 90% of runs that don't use AI don't pay the 200ms tax. User experience wins.
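A minimal sketch of the lazy-loading pattern follows. The lazy helper and the function names are hypothetical, and a Node built-in (node:zlib) stands in for the SDK so the example runs without extra packages; in Rift the loader would presumably wrap a dynamic import of @anthropic-ai/sdk.

```typescript
// Wraps a loader so the expensive import happens at most once, on first use.
function lazy<T>(load: () => Promise<T>): () => Promise<T> {
  let cached: Promise<T> | undefined;
  return () => (cached ??= load());
}

// Stand-in for the heavy dependency; nothing is imported until this is called.
const loadHeavyModule = lazy(() => import("node:zlib"));

async function compressedSize(text: string, useCompression: boolean): Promise<number> {
  if (!useCompression) return text.length; // fast path: the module is never loaded
  const zlib = await loadHeavyModule();
  return zlib.gzipSync(text).length;
}
```

The fast path returns before the import expression is ever evaluated, which is the property that keeps the import cost off runs that do not need the module.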

4. The Configuration Layer

4.1 The rift.yml Schema

version: 1
services:
  api:
    path: ./backend
    framework: django
    run: python manage.py runserver
    build: python -m build
    test: python manage.py test
    install: pip install -r requirements.txt
    port: 8000
    restart: 5
    depends_on: []
    env:
      DATABASE_URL: postgres://localhost:5432/mydb

The version field is mandatory. This is a forward-looking decision: it allows the schema to evolve without breaking existing configs. If Rift v2 needs a new structure, it can read version: 1 files through a migration layer instead of guessing whether a config is old or new format.

The restart field per-service overrides the global --max-restarts flag. This exists because not all services are equal — a flaky worker might need 5 restart attempts while a database proxy that fails once is genuinely broken.

4.2 Validate at the Boundary, Trust Internally

config/reader.ts is the single validation point. It reads YAML, validates the structure, and returns a typed RiftConfig object. Every downstream function receives an already-validated object and doesn't re-check fields.

rift.yml (YAML string)

→ reader.ts: parse + validate → throws if invalid

→ typed RiftConfig object

→ trusted everywhere downstream

The alternative — defensive checks in every function — leads to code that's simultaneously more verbose and less reliable. You end up with if (!service.path) guards scattered across the codebase, each one a silent admission that you don't trust the data flow.
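As an illustration of validating once at the boundary, the sketch below takes an already-parsed YAML document and either returns a typed object or throws. The reduced ServiceConfig shape and the validateConfig name are assumptions; the real reader.ts also handles the YAML parsing step and the remaining fields.

```typescript
// Reduced, hypothetical shapes: the real schema has more fields.
interface ServiceConfig {
  path: string;
  run: string;
  port?: number;
}

interface RiftConfig {
  version: 1;
  services: Record<string, ServiceConfig>;
}

function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// The only place that inspects untyped data; callers receive a trusted RiftConfig.
function validateConfig(raw: unknown): RiftConfig {
  if (!isRecord(raw)) throw new Error("rift.yml must contain a mapping at the top level");
  if (raw.version !== 1) throw new Error(`unsupported config version: ${String(raw.version)}`);
  const rawServices = raw.services;
  if (!isRecord(rawServices)) throw new Error('rift.yml is missing required field "services"');

  const services: Record<string, ServiceConfig> = {};
  for (const [serviceName, svc] of Object.entries(rawServices)) {
    if (!isRecord(svc)) throw new Error(`service "${serviceName}" must be a mapping`);
    const { path, run } = svc;
    if (typeof path !== "string") {
      throw new Error(`service "${serviceName}" is missing required field "path"`);
    }
    if (typeof run !== "string") {
      throw new Error(`service "${serviceName}" is missing required field "run"`);
    }
    let port: number | undefined;
    if (typeof svc.port === "number") port = svc.port;
    else if (svc.port !== undefined) throw new Error(`service "${serviceName}" has a non-numeric "port"`);
    services[serviceName] = { path, run, port };
  }
  return { version: 1, services };
}
```

Downstream code can then read config.services.api.path without a guard, because a config that lacks it never gets past this function.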

4.3 Config Roundtrip Integrity

A core test: writeConfig(readConfig("fixture.yml")) must produce identical output. This ensures that Rift can read a config, modify one field, and write it back without corrupting the rest. It's a seemingly small property that prevents an entire class of bugs where reading and writing disagree on formatting, field ordering, or type coercion.

5. Port Conflict Resolution

This is one of the most nuanced subsystems in Rift, because it touches multiple files across multiple services and must maintain cross-service consistency.

5.1 The Problem

Many frameworks share default ports. Next.js, Create React App, and Rails all default to port 3000, while Django and FastAPI (served by uvicorn) both default to 8000. In a monorepo where a Next.js frontend and an admin panel both resolve to 3000, the two dev servers would compete for the same port, and one would either crash or quietly move to a different one.

5.2 The Resolution Algorithm

During rift init:

Step 1 — Reassign ports. The first service detected on a given port keeps it. Subsequent services get incremented ports. The Next.js frontend stays on 3000; the Vite admin panel moves to 3001.

Step 2 — Rewrite run commands. For frameworks that accept port flags, the run command is rewritten. Next.js gets -p 3001. Vite gets --port 3001. Django gets a positional argument. This means the user doesn't have to know the port flag syntax for each framework.

Step 3 — Inject PORT environment variable. For frameworks like Express and Fastify that read process.env.PORT, the reassigned port is added to the service's env block in rift.yml.

Step 4 — Propagate to .env files. This is the subtle one. Rift scans all services' .env* files for references to the old port — localhost:3000, 127.0.0.1:3000, 0.0.0.0:3000 — and rewrites them to the new port. This ensures that a frontend's NEXT_PUBLIC_API_URL=http://localhost:8000 stays correct when the API service's port changes.

Detected services:

frontend (Next.js) → port 3000

admin (Vite) → port 3000 ← conflict!

api (Express) → port 3000 ← conflict!

After resolution:

frontend (Next.js) → port 3000 (keeps original)

admin (Vite) → port 3001 (run cmd rewritten: --port 3001)

api (Express) → port 3002 (PORT env var injected)

.env propagation:

frontend/.env: API_URL=http://localhost:3002 (was :3000)

admin/.env.local: API_URL=http://localhost:3002 (was :3000)
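Steps 1 and 4 are mostly pure logic, so they can be sketched without touching the file system. The function names and the DetectedService shape below are hypothetical, and the regular expression is one plausible way to match the three host spellings mentioned above, not necessarily the one Rift uses.

```typescript
interface DetectedService {
  name: string;
  port: number;
}

// Step 1: the first service on a port keeps it; later ones move to the next free port.
function reassignPorts(services: DetectedService[]): DetectedService[] {
  const taken = new Set<number>();
  return services.map((svc) => {
    let port = svc.port;
    while (taken.has(port)) port += 1;
    taken.add(port);
    return { ...svc, port };
  });
}

// Step 4: rewrite host:port references in the text of a .env file.
// The trailing \b keeps :3000 from matching inside :30001.
function rewriteEnvPorts(envText: string, oldPort: number, newPort: number): string {
  const pattern = new RegExp(`\\b(localhost|127\\.0\\.0\\.1|0\\.0\\.0\\.0):${oldPort}\\b`, "g");
  return envText.replace(pattern, `$1:${newPort}`);
}
```

One thing the sketch does not decide is which service an old reference belongs to when several services shared the port; a real implementation has to pick the target from context.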

5.3 Pre-Flight Check During rift run

Resolving conflicts in rift.yml is necessary but not sufficient. Between init and run, a port might get taken by an unrelated process. Rift performs a pre-flight check before spawning any service. If a port is occupied, it fails fast with an actionable hint: run lsof -i :8000 to find the process. No partial startup, no confusing error from a framework that tries to bind and fails.
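One common way to implement such a check in Node is to try binding a throwaway server to the port. The sketch below takes that approach; it is an assumption about the technique, since the article does not say how ports.ts probes availability, and the function names are hypothetical.

```typescript
import * as net from "node:net";

// Resolves true if the port can be bound, false if the bind fails with EADDRINUSE.
function isPortFree(port: number, host = "127.0.0.1"): Promise<boolean> {
  return new Promise((resolve, reject) => {
    const probe = net.createServer();
    probe.once("error", (err: NodeJS.ErrnoException) => {
      if (err.code === "EADDRINUSE") resolve(false);
      else reject(err);
    });
    probe.once("listening", () => probe.close(() => resolve(true)));
    probe.listen(port, host);
  });
}

// Fails before anything is spawned, so there is no partial startup to clean up.
async function preflight(ports: number[]): Promise<void> {
  for (const port of ports) {
    if (!(await isPortFree(port))) {
      throw new Error(`port ${port} is already in use; run "lsof -i :${port}" to find the process`);
    }
  }
}
```

The check is inherently racy: a port that is free during pre-flight can still be taken a moment later, so it narrows the failure window without closing it.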

6. The Process Runner

6.1 Two Entry Points, One Engine

runner/lifecycle.ts exports two functions:

- startServices(): spawns all services, returns ManagedProcess[], does not block. Used by the MCP server's rift_start tool.

- startAll(): calls startServices(), then adds signal handlers and a wait loop. Used by rift run.

This split exists because the MCP server and the CLI have fundamentally different lifecycle requirements. The CLI owns the terminal and must block until the user exits. The MCP server returns a response and moves on — the spawned processes persist independently, tracked by PID file.

6.2 Dependency Ordering

Services declare dependencies via depends_on. Rift resolves these into a topological order using Kahn's algorithm — a standard approach implemented inline in about 20 lines. No graph library.

The decision to implement this inline rather than pulling in a dependency is explicit: the algorithm is well-understood, the implementation is short, and adding an 8th dependency to the project requires justification in the PR description. The dependency budget is seven. This constraint forces the team to evaluate whether each package earns its place.

Dependency graph:

frontend → api → database

Start order:

1. database (no dependencies)

2. api (waits for database port to accept connections)

3. frontend (waits for api port to accept connections)

The wait-for-port mechanism is important: Rift doesn't just start services in order — it waits for the previous service's port to accept connections before starting the next. This prevents the common failure mode where a frontend starts before its API is ready and immediately fails health checks.
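For readers who have not met Kahn's algorithm, here is a sketch of the ordering step in roughly the size the article describes. The DependencyMap type and startOrder name are hypothetical; only the algorithm itself comes from the text, and the port-waiting step is left out.

```typescript
// Maps each service name to the services it depends on (its depends_on list).
type DependencyMap = Record<string, string[]>;

function startOrder(deps: DependencyMap): string[] {
  const known = new Set(Object.keys(deps));
  const remaining = new Map<string, number>(); // unmet dependency count per service
  const dependents = new Map<string, string[]>(); // reverse edges
  for (const [svc, needs] of Object.entries(deps)) {
    remaining.set(svc, needs.length);
    for (const need of needs) {
      if (!known.has(need)) throw new Error(`service "${svc}" depends on unknown service "${need}"`);
      dependents.set(need, [...(dependents.get(need) ?? []), svc]);
    }
  }
  // Start with services that have no dependencies, then release their dependents.
  const ready = Array.from(remaining.entries()).filter(([, n]) => n === 0).map(([svc]) => svc);
  const order: string[] = [];
  while (ready.length > 0) {
    const svc = ready.shift()!;
    order.push(svc);
    for (const dependent of dependents.get(svc) ?? []) {
      const left = remaining.get(dependent)! - 1;
      remaining.set(dependent, left);
      if (left === 0) ready.push(dependent);
    }
  }
  if (order.length !== remaining.size) throw new Error("circular dependency in depends_on");
  return order;
}
```

A cycle shows up naturally: the services in it never reach zero unmet dependencies, so the final length check fails.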

6.3 Auto-Restart with Exponential Backoff

When a service crashes (non-zero exit code), Rift restarts it automatically:

Attempt 1: wait 1 second → restart

Attempt 2: wait 2 seconds → restart

Attempt 3: wait 4 seconds → restart

(default max: 3 attempts)

The backoff doubles each attempt: 1s, then 2s, then 4s, and 8s for a fourth attempt when the limit is raised above the default of three. After exhausting all attempts, Rift prints a message suggesting npx rift fix and gives up.
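The schedule reduces to a one-line formula, delay = 1s × 2^(attempt − 1). A small sketch, with hypothetical function names and the default limit of three taken from the text:

```typescript
// Delay before restart attempt N (1-based): 1000, 2000, 4000, 8000, ...
function backoffDelayMs(attempt: number, baseMs = 1000): number {
  return baseMs * 2 ** (attempt - 1);
}

// Returns the delay to wait before the next restart, or null once the budget is spent.
function nextRestartDelay(attemptsSoFar: number, maxRestarts = 3): number | null {
  if (attemptsSoFar >= maxRestarts) return null;
  return backoffDelayMs(attemptsSoFar + 1);
}
```

With the default limit the service waits 1s, 2s, and 4s; a per-service restart: 5 would extend the same sequence to 8s and 16s.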

No cascade restart. When a service crashes, only that service restarts — not its dependents. This matches Docker Compose behavior and avoids restart storms where a crashing database triggers restarts of every service that depends on it, which all crash again because the database is still down, which triggers more restarts.

6.4 Log Multiplexing

The terminal output during rift run is the product. Each service's stdout and stderr are multiplexed into a single stream with color-coded, left-padded prefixes:

api Watching for file changes with StatReloader

frontend ready - started server on 0.0.0.0:3000

api System check identified no issues.

frontend ✓ compiled in 1.2s

Color assignment is deterministic: the service name is hashed to pick an index from a fixed palette (cyan, magenta, yellow, green, blue, red). The same service always gets the same color across runs. This is a small detail with outsized usability impact — developers learn to associate color with service, making it faster to scan logs visually.
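Deterministic color assignment needs nothing more than a stable string hash and a modulo. The article does not say which hash Rift uses, so the djb2-style function below is an assumption, as are the function names; the palette order is taken from the text.

```typescript
const PALETTE = ["cyan", "magenta", "yellow", "green", "blue", "red"] as const;
type Color = (typeof PALETTE)[number];

// djb2-style string hash, kept as an unsigned 32-bit integer.
function hashName(serviceName: string): number {
  let hash = 5381;
  for (let i = 0; i < serviceName.length; i += 1) {
    hash = ((hash * 33) ^ serviceName.charCodeAt(i)) >>> 0;
  }
  return hash;
}

// Same name in, same color out, on every run and every machine.
function colorFor(serviceName: string): Color {
  return PALETTE[hashName(serviceName) % PALETTE.length];
}
```

With six colors, two services can land on the same one; hashing trades guaranteed uniqueness for stability across runs.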

Service logs are also written to .rift/logs/<service>.log for later analysis by rift fix. Writes are buffered to reduce disk I/O.

6.5 Signal Handling

rift run traps SIGINT (Ctrl+C) and SIGTERM. On receiving either:

- Forward SIGTERM to all child processes

- Wait for each to exit

- Clean up the PID file (.rift/pids.json)

The PID file lives in the project root under .rift/, not in /tmp. This is deliberate: it keeps process state co-located with the project, allows multiple Rift instances across different projects without collision, and survives system-level tmp cleanups.

7. The Crash Diagnosis System (rift fix)

7.1 Architecture

rift fix is a two-stage pipeline:

Stage 1 — Diagnosis. Read crash logs from .rift/logs/, analyze them, produce structured diagnosis results. The diagnose() function returns data — it doesn't print anything. This makes it reusable by both CLI and MCP.

Stage 2 — Presentation (or application). In CLI mode, fix() pretty-prints the diagnosis. With --apply, applyFixes() executes the suggested commands.

.rift/logs/*.log

→ diagnose()

├── AI available? → Claude Haiku (tool_use, structured JSON)

│ last 200 lines per service + config + project files

├── No AI? → Pattern matching (10 common errors)

└── No match? → Generic hint ("set ANTHROPIC_API_KEY for AI diagnosis")

→ DiagnosisResult[]

├── fix() → pretty-print for terminal

└── applyFixes() → execute fix_command per diagnosis

7.2 Pattern Matching: The Non-AI Fallback

fix/hints.ts matches 10 common error patterns:

| Pattern | Problem | Suggested Fix |
|---|---|---|
| EADDRINUSE | Port already in use | lsof -i :<port> |
| MODULE_NOT_FOUND | Missing npm package | npm install |
| Permission denied | File permission issue | - |
| no such table | Missing database migration | Run migrations |
| ImportError / ModuleNotFoundError | Missing Python package | pip install -r requirements.txt |
| ECONNREFUSED | Dependency service down | Check dependent services |
| Native module error | Binary incompatibility | rm -rf node_modules && npm install |
| out of memory / heap | Memory exhaustion | Increase Node memory limit |
| SyntaxError | Code syntax error | - |
| dylib / .so failure | Shared library issue | - |

Where actionable, hints include a fix_command that --apply can execute automatically. MODULE_NOT_FOUND produces npm install. ImportError produces pip install -r requirements.txt. Native module errors produce rm -rf node_modules && npm install.
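A table like this maps naturally onto an array of pattern objects. The sketch below covers four of the ten rows; the Hint shape, the exact regular expressions, and the matchHint name are assumptions, while the fix_command field name follows the article.

```typescript
interface Hint {
  pattern: RegExp;
  problem: string;
  suggestion?: string;
  fix_command?: string; // present only when --apply could run it
}

// A subset of the table above; first match wins.
const HINTS: Hint[] = [
  { pattern: /EADDRINUSE/, problem: "Port already in use", suggestion: "lsof -i :<port>" },
  { pattern: /MODULE_NOT_FOUND/, problem: "Missing npm package", fix_command: "npm install" },
  {
    pattern: /ImportError|ModuleNotFoundError/,
    problem: "Missing Python package",
    fix_command: "pip install -r requirements.txt",
  },
  { pattern: /ECONNREFUSED/, problem: "Dependency service down", suggestion: "Check dependent services" },
];

function matchHint(logTail: string): Hint | null {
  return HINTS.find((hint) => hint.pattern.test(logTail)) ?? null;
}
```

Keeping suggestion and fix_command separate lets a diagnostic like lsof be shown to the user without --apply ever executing it.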

7.3 AI Diagnosis

With an API key, Rift sends the last 200 lines of each crashed service's log, plus the service config and project files (package.json, requirements.txt), to Claude Haiku. The 200-line limit is a token budget decision — keeping the payload under 15K tokens keeps costs at roughly $0.001 per call while providing enough context for meaningful diagnosis.

The response comes back as a structured tool call: problem summary, explanation, and suggested fix command. No free-text parsing.

7.4 The --apply Flag

rift fix --apply takes the diagnosis results, filters to entries that have a fix_command, resolves each service's path from the config for the working directory, and executes the command via execa:

execaCommand(cmd, { cwd, shell: true, reject: false })

The reject: false is important: it means a failing fix command doesn't throw an exception. Instead, the result includes the exit code and output, allowing Rift to report which fixes succeeded and which failed without crashing itself.

8. The MCP Server

8.1 Why MCP?

The Model Context Protocol allows AI agents (Claude Code, other MCP-compatible clients) to interact with Rift programmatically. Instead of the agent parsing terminal output, it calls structured tools and receives structured JSON.

8.2 Six Tools, One Codebase

The MCP server (src/mcp.ts) exposes six tools that reuse the exact same internal functions as the CLI:

| MCP Tool | Internal Function | Purpose |
|---|---|---|
| rift_detect | detect() + resolvePortConflicts() + writeConfig() | Scan and configure |
| rift_status | readPidFile() + processAlive() | Check running services |
| rift_start | startServices() | Spawn services (non-blocking) |
| rift_stop | stopServices() | Kill everything |
| rift_diagnose | diagnose() | Read crash logs, diagnose |
| rift_fix_apply | diagnose() + applyFixes() | Diagnose and execute fixes |

The code sharing is total. The MCP server is not a separate implementation — it's a different interface to the same engine. This eliminates an entire class of bugs where CLI and MCP behavior diverge.

8.3 The Logger Swap

The CLI writes colored, formatted output to the terminal. The MCP server can't do that — it needs to collect output into a data structure. The solution is createCollectingLogger(), which has the same interface as the terminal logger but appends to an array instead of writing to stdout/stderr.

This is a textbook example of the strategy pattern applied without any pattern machinery. Two functions with the same signature, selected at call time. No interface declaration, no factory, no dependency injection container.
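A minimal sketch of the swap, assuming a logger is just a function of service name and line. The Logger type, the return shape of createCollectingLogger, and the announceStart helper are hypothetical; only the createCollectingLogger name comes from the article.

```typescript
type Logger = (service: string, line: string) => void;

// CLI flavor: write straight to the terminal (colors omitted here).
function createTerminalLogger(): Logger {
  return (service, line) => {
    process.stdout.write(`${service} ${line}\n`);
  };
}

// MCP flavor: same call signature, but lines accumulate in an array.
function createCollectingLogger(): { log: Logger; lines: string[] } {
  const lines: string[] = [];
  return {
    log: (service, line) => {
      lines.push(`${service} ${line}`);
    },
    lines,
  };
}

// Engine code takes a Logger and never knows which flavor it received.
function announceStart(services: string[], log: Logger): void {
  for (const service of services) log(service, "starting");
}
```

The CLI would pass createTerminalLogger() and the MCP handler would pass the log half of createCollectingLogger(), then return lines as part of its response.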

8.4 Process Lifecycle for MCP

Services spawned via rift_start persist independently of the MCP call. They're tracked via the same PID file as CLI-spawned services. The MCP server registers a process.on("exit") handler that SIGTERMs all children spawned during the session — a safety net to prevent orphaned processes.

9. The --json Flag: Structured Output Everywhere

Every command supports --json for structured output. When set, all human-readable output is suppressed — no colors, no prefixed lines, no hints. Only JSON goes to stdout.

rift fix --json | jq '.diagnoses[0].fix_command'

The implementation discipline is strict: when --json is active, riftLog() becomes a no-op. Only jsonOut() — which calls process.stdout.write(JSON.stringify(...)) — writes to stdout. This prevents garbled output for pipe-based consumers.

This exists for two audiences: AI agents (which parse JSON natively) and CI pipelines (which can integrate Rift into automated workflows). It's also why library functions throw errors instead of calling process.exit() — the --json handler needs to catch the error and include it in the JSON output.
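The discipline described here can be sketched as two output functions that are never both active. The createOutput and runCommand helpers, the injectable writer, the ok/result/error envelope, and the trailing newline are all assumptions made for illustration; only the riftLog and jsonOut names come from the article.

```typescript
type Writer = (text: string) => void;

function createOutput(jsonMode: boolean, write: Writer = (t) => { process.stdout.write(t); }) {
  return {
    // Human-readable lines: silenced entirely in JSON mode.
    riftLog: (message: string): void => {
      if (!jsonMode) write(`rift ${message}\n`);
    },
    // Machine-readable output: the only writer in JSON mode.
    jsonOut: (payload: unknown): void => {
      if (jsonMode) write(JSON.stringify(payload) + "\n");
    },
  };
}

// Library code throws; the command wrapper decides how the error is reported.
function runCommand<T>(jsonMode: boolean, action: () => T, write?: Writer): number {
  const out = createOutput(jsonMode, write);
  try {
    out.jsonOut({ ok: true, result: action() });
    return 0;
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    out.riftLog(`error: ${message}`);
    out.jsonOut({ ok: false, error: message });
    return 1;
  }
}
```

Because the action throws instead of exiting, the wrapper can still emit one well-formed JSON document on failure, which is what a consumer piping into jq needs.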

10. The Dependency Budget

Rift has seven dependencies. Adding an eighth requires justification in the PR description. This is not a soft guideline — it's a rule.

| Dependency | Purpose | Justification |
|---|---|---|
| commander | CLI framework | Industry standard, stable API |
| yaml | Parse/write rift.yml | More spec-compliant than js-yaml |
| picocolors | Terminal colors | 2KB, zero deps (Chalk has ESM issues) |
| execa | Process spawning | Better errors/API than raw child_process |
| @anthropic-ai/sdk | AI detection + diagnosis | Official SDK, lazy-loaded |
| @modelcontextprotocol/sdk | MCP server | Required for MCP integration |
| zod | Schema validation | Required by MCP SDK |

The choices are revealing. picocolors over Chalk — because Chalk's ESM transition caused compatibility headaches across the Node ecosystem, and picocolors does the same job in 2KB with zero dependencies. yaml over js-yaml — because YAML spec compliance matters when you're generating config files that other tools might consume. execa over raw child_process — because process spawning is Rift's core operation and better error messages justify the dependency.

The Anthropic SDK and MCP SDK are mandatory for their respective features. Zod is pulled in by the MCP SDK, not by choice. If it weren't a transitive requirement, it wouldn't be here.

11. The File Architecture: 19 Files, 200 Lines Each

src/

├── index.ts # Entry point — shebang, nothing else

├── cli.ts # Commander setup, all commands, --json

├── mcp.ts # MCP server entry, 6 tools, shebang

├── utils.ts # Shared helpers (resolveApiKey)

├── detect/

│ ├── index.ts # Orchestrator: try AI → fall back to rules

│ ├── rules.ts # Hardcoded detection

│ └── ai.ts # Claude API detection (lazy-loaded)

├── runner/

│ ├── index.ts # PID file ops, stop, re-exports

│ ├── lifecycle.ts # startAll, startServices, spawn, restart, deps

│ ├── logger.ts # Multiplexed colored log prefixing + file logging

│ ├── monitor.ts # CPU/memory via ps

│ └── ports.ts # Port availability, conflicts, .env propagation

├── config/

│ ├── schema.ts # TypeScript types, version: 1

│ ├── reader.ts # Parse rift.yml → typed object

│ └── writer.ts # Typed object → rift.yml

└── fix/

    ├── index.ts # Orchestrator: diagnose() returns data

    ├── apply.ts # Execute fix commands

    ├── ai.ts # AI diagnosis via Claude Haiku

    └── hints.ts # Non-AI pattern matching

The 200-line rule is enforced: if a file grows past 200 lines, split it. This isn't about aesthetics — it's about cognitive load. A 200-line file fits in a single editor viewport. You can understand the entire module without scrolling. It also forces clean separation of concerns: you can't have a 500-line God module that does detection, validation, and file writing when the cap is 200.

The directory structure maps to concepts, not layers. There's no services/, models/, controllers/ abstraction. There's detect/ (detection), runner/ (process management), config/ (configuration), and fix/ (crash diagnosis). Each directory is a bounded context.

12. The Coding Philosophy

12.1 KISS — Keep It Simple, Strictly

Functions do one thing. detectWithRules() detects with rules. writeConfig() writes config. If you're naming a function detectAndValidateAndWrite, you've already violated the principle.

Flat over nested. Early return over else chains. No classes unless you need inheritance or stateful lifecycle. The codebase is free functions and plain objects.

12.2 YAGNI — Build What's Needed Now

No plugin system. No event emitters. No middleware chains. No hook architectures. The "What Not To Build" section is as important as the "What To Build" section: watch mode, daemon mode, plugin system, TUI dashboard, Windows support, cloud features — all explicitly deferred.

12.3 DRY — But Not at the Cost of Clarity

Duplicate code is acceptable if it's 2-3 lines and the abstraction would obscure intent. Extraction happens only when a pattern repeats 3+ times AND represents the same concept. Similar-looking code that does conceptually different things stays duplicated.

12.4 Explicit Over Clever

// Yes

const isRunning = pid !== null && processExists(pid);

// No

const isRunning = !!pid && !!processExists(pid);

The double-bang pattern is shorter but hides intent. The explicit comparison says what it means. In a tool where process state management is critical, clarity in boolean logic is worth the extra characters.

13. Error Handling: A Two-Layer System

Library code (reader, lifecycle, detection) throws with human-readable messages:

throw new Error(`service "${name}" is missing required field "path"`);

CLI commands catch and format for terminal:

try {

const config = readConfig(configPath);

} catch (err) {

riftLog(`error: ${(err as Error).message}`);

process.exit(1);

}

This split exists because three consumers need to handle errors differently:

- CLI: print to stderr, exit with code 1

- --json mode: serialize the error into JSON output

- MCP server: return { isError: true, content: [...] }

If library code called process.exit(), the MCP server would crash instead of returning an error response. By throwing, each consumer gets to handle errors in its own way.

Exit codes are also specified: 0 for success, 1 for user error, 2 for system error. Stack traces are suppressed unless --verbose is set. Errors are for users, not developers.

14. Performance Budgets

| Operation | Budget | Rationale |
|---|---|---|
| npx rift run cold start (no AI) | < 300ms to first spawn | Feels instant; competing tools are slower |
| rift init with AI | < 5 seconds | Includes API round-trip; acceptable for one-time setup |
| rift init without AI | < 1 second | Must feel faster than writing config by hand |
| rift status | < 100ms | Reading a JSON file and checking PIDs should be trivial |

These aren't aspirations. The CLAUDE.md states: "If a code change regresses startup time past these thresholds, it's a bug."

The 300ms cold-start budget is the reason the Anthropic SDK is lazy-loaded. Without lazy loading, the SDK's 200ms import time would consume two-thirds of the budget before any user code runs. The remaining 100ms for directory scanning, config reading, and process spawning would be extremely tight.

15. The CLI Output as Product

No spinners for operations under 500ms. No emoji. No ASCII art. Clean, professional, informative.

Every line of Rift output is prefixed with rift in dim gray. This distinguishes Rift's own messages from the output of the services it's running. During rift run, where multiple services are writing to the same terminal, this visual separation is critical.

The output examples in the CLAUDE.md are not suggestions — they're specifications. The exact format of rift init output, rift run multiplexed logs, auto-restart messages, rift status tables, rift fix diagnostics, and error messages are all defined. This level of output specification is unusual for a CLI tool, but it reflects the philosophy that the terminal output is the user interface.

16. Directory Scanning Rules

Scanning is bounded and opinionated:

Depth limit: 3 levels. Deeper nesting is rare enough that AI detection can handle it. The depth limit keeps rule-based scanning fast.

Always skip: node_modules, .git, dist, build, .next, .nuxt, __pycache__, .venv, venv, target, .rift, vendor. These are output directories, dependency caches, or Rift's own state. Scanning them would be slow and produce false positives.

Service candidates: Any subdirectory with a package.json, requirements.txt, go.mod, Cargo.toml, or Gemfile. The root directory is also a candidate. This set of files covers the five major ecosystem entry points: Node.js, Python, Go, Rust, Ruby.

Monorepo detection: If the root has a workspaces field in package.json, a pnpm-workspace.yaml, or a packages/ or apps/ directory, scan those workspace directories as service candidates. This handles the standard monorepo layouts (Yarn workspaces, pnpm workspaces, Turborepo/Nx conventions) without requiring configuration.
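The depth limit, skip list, and candidate markers combine into a short recursive walk. In the sketch below the directory listing is injectable so the logic can be exercised without a real file tree; the findCandidates name and the Entry and ListDir types are hypothetical, and workspace-aware monorepo handling is left out.

```typescript
import { readdirSync } from "node:fs";
import { join } from "node:path";

const SKIP = new Set([
  "node_modules", ".git", "dist", "build", ".next", ".nuxt",
  "__pycache__", ".venv", "venv", "target", ".rift", "vendor",
]);
const MARKERS = new Set(["package.json", "requirements.txt", "go.mod", "Cargo.toml", "Gemfile"]);
const MAX_DEPTH = 3;

interface Entry {
  name: string;
  isDirectory: boolean;
}
type ListDir = (dir: string) => Entry[];

const listFromDisk: ListDir = (dir) =>
  readdirSync(dir, { withFileTypes: true }).map((e) => ({ name: e.name, isDirectory: e.isDirectory() }));

// Returns every directory (root included) that holds an ecosystem marker file.
function findCandidates(root: string, listDir: ListDir = listFromDisk, depth = 0): string[] {
  const entries = listDir(root);
  const found: string[] = entries.some((e) => !e.isDirectory && MARKERS.has(e.name)) ? [root] : [];
  if (depth >= MAX_DEPTH) return found; // do not descend past three levels
  for (const entry of entries) {
    if (entry.isDirectory && !SKIP.has(entry.name)) {
      found.push(...findCandidates(join(root, entry.name), listDir, depth + 1));
    }
  }
  return found;
}
```

Skipped directories are never listed at all, which is where most of the speed comes from: node_modules alone can hold thousands of package.json files.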

17. Security Model

Rift executes shell commands defined in rift.yml. This is the same trust model as Makefiles, npm scripts, and Docker Compose — the user should review the config before running it in an untrusted project.

Specific security decisions:

- No secrets in rift.yml. The env field is for non-sensitive config (ports, local database URLs). This is documented in generated configs via a YAML comment.

- .rift/pids.json contains only PIDs. No sensitive data.

- .rift/logs/ may contain sensitive data from service output. .rift/ is added to .gitignore during rift init.

- rift init creates or appends to .gitignore to ensure .rift/ never gets committed.

18. Testing Strategy

Detection is the core value. It gets the most test coverage.

tests/

├── detect/

│ ├── rules.test.ts # One test per framework, fixture directories

│ └── fixtures/ # Minimal marker files, no actual source code

├── config/

│ ├── reader.test.ts # Valid, invalid, missing fields, version mismatch

│ └── writer.test.ts # Roundtrip integrity

├── runner/

│ ├── ports.test.ts # Conflicts, resolution, .env propagation

│ ├── lifecycle.test.ts # Backoff calculation, dependency ordering

│ └── monitor.test.ts # ps output parsing

└── fix/

    └── hints.test.ts # Pattern matching for common errors

Key testing decisions:

Detection fixtures are minimal. Each fixture is a directory with just enough files to trigger detection — a package.json with next in dependencies and a next.config.js. No actual source code. This keeps tests fast, focused, and easy to understand.

AI detection tests mock the SDK. They verify prompt construction (what files and content are sent) and fallback behavior (what happens when the API fails). They don't test the model's intelligence — that's Anthropic's problem.

No unit tests for process spawning. That's integration test territory. Unit tests cover detection logic, config parsing, port conflict resolution, backoff calculation, dependency ordering, and error pattern matching — the pure logic that can be tested without spawning real processes.

19. The Complete Data Flow

rift init

User runs `npx rift init`

→ Scan directories (max 3 levels, skip node_modules etc.)

→ For each directory with a package.json/requirements.txt/go.mod/Cargo.toml/Gemfile:

→ Check file markers + dependency lists

→ Match against 15 known frameworks (rules)

→ If AI key available: send file tree + configs to Claude Haiku

→ Fall back to rules if AI fails

→ Collect detected services

→ If zero services: print error + hints, exit (never write empty config)

→ Resolve port conflicts:

→ First service keeps original port

→ Later services get incremented ports

→ Rewrite run commands with port flags

→ Inject PORT env vars

→ Propagate port changes to .env files

→ Write rift.yml

→ Add .rift/ to .gitignore

→ Print summary + next step hint

rift run

User runs `npx rift run`

→ Read and validate rift.yml

→ Pre-flight port check (all ports available?)

→ If port taken: fail fast with lsof hint

→ Resolve dependency order (topological sort)

→ For each service in order:

→ Spawn process (execa)

→ Wait for port to accept connections

→ Start multiplexed log output (color-coded prefix)

→ Write logs to .rift/logs/<service>.log

→ Store PIDs in .rift/pids.json

→ Trap SIGINT/SIGTERM

→ On service crash:

→ Exponential backoff restart (1s → 2s → 4s)

→ Max 3 attempts (configurable)

→ On exhaustion: suggest `npx rift fix`

→ On signal:

→ SIGTERM all children

→ Wait for exit

→ Clean up PID file

rift stop

User runs `npx rift stop`

→ Read .rift/pids.json

→ For each PID:

→ Send SIGTERM

→ Verify exit

→ Clean up PID file

→ Return { stopped: [...], alreadyStopped: [...] }

rift fix

User runs `npx rift fix`

→ Read .rift/logs/*.log

→ For each crashed service:

→ If AI key available:

→ Send last 200 lines + config + project files to Claude Haiku

→ Receive structured diagnosis (problem, explanation, fix_command)

→ Else:

→ Pattern-match against 10 common errors

→ Return problem + suggestion + optional fix_command

→ Fall back to generic hint if no match

→ Print diagnosis

With --apply:

→ Filter to diagnoses with fix_command

→ For each:

→ Resolve service path from config

→ Execute fix_command via execa (shell: true, reject: false)

→ Report success/failure

20. Conclusion: The Opinionated Tool

Rift is not a framework. It's not a platform. It's a CLI tool with 19 files, 7 dependencies, and a very specific opinion about what running a full-stack project should feel like.

The opinions are everywhere: in the 200-line file cap that forces clean module boundaries, in the dependency budget that requires justification for each new package, in the performance budgets that treat regressions as bugs, in the output format that specifies exact terminal output down to the padding of service names, and in the AI integration that enhances but never gates.

Every trade-off points the same direction: the user's experience of running npx rift init in a project they've never configured for parallel execution, and seeing it just work. That's the product. Everything else is engineering in service of that moment.

Ahmed essyad
