Your Test Suite Is Lying to You

I built a 4-agent testing pipeline that scans a codebase, ranks what matters, writes the tests, and validates them. No human in the loop except two checkpoints. Here's everything I learned about what actually matters in testing — and what the research backs up.

Coverage Is a Vanity Metric

Google studied thousands of internal projects. The sweet spot? 60-75% coverage. Not 100. Not 90.

The relationship between coverage and bug detection is logarithmic. Going from 30% to 70% is where nearly all the value lives. Going from 90% to 95%? You're writing tests that cost more to maintain than the bugs they'd ever catch.

But here's the part that should bother you: researchers at the University of Waterloo generated 31,000 test suites across 5 large systems. When they controlled for test suite size, coverage had only a low to moderate correlation with actually catching bugs.

You can hit 100% coverage with tests that assert nothing. Coverage measures execution, not correctness.

Pair it with mutation testing or stop pretending the number means something. Tools like Stryker or mutmut inject small bugs into your code and check if your tests notice. If your tests still pass after the code is broken — your tests are theater.

You're Testing the Easy Stuff

Less than 5% of code accounts for more than 90% of execution in most applications. Yet teams spread their testing effort evenly, or worse — they test what's easy instead of what matters.

This is why the first agent in my pipeline is a scanner that ranks endpoints by business impact × failure likelihood. Not by what's convenient. Not alphabetically. By what actually hurts when it breaks.

The priority order, based on where production incidents actually come from:

1. Payment and billing logic: a bug here costs real money, immediately.
2. Auth: a bug here is a security incident.
3. Data mutations (writes, deletes, state changes): a read bug is annoying, a write bug corrupts data.
4. High-traffic reads: your availability risk.
5. Third-party integrations: statistically where the most production failures happen. Your code is fine. The network between you and Stripe is not.
6. Recently changed code: defects correlate with change, not complexity.
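Here is a minimal Python sketch of the scanner's ranking rule, risk = business impact × failure likelihood. The 1-5 scales, the category weights, and treating recent change as a +1 likelihood bump are all assumptions made for illustration. This is not the pipeline's actual scoring.

```python
from dataclasses import dataclass

# Hypothetical impact weights (1-5), loosely mirroring the priority order above.
IMPACT = {
    "payments": 5,
    "auth": 5,
    "write": 4,
    "hot_read": 3,
    "third_party": 3,
    "other": 1,
}


@dataclass
class Endpoint:
    path: str
    category: str            # one of the IMPACT keys
    likelihood: int          # hypothetical 1-5 estimate of how likely it is to fail
    changed_recently: bool = False

    def risk(self) -> int:
        # Recently changed code gets one extra likelihood point (capped at 5),
        # because defects cluster around change.
        likelihood = min(5, self.likelihood + (1 if self.changed_recently else 0))
        return IMPACT.get(self.category, IMPACT["other"]) * likelihood


def rank(endpoints: list[Endpoint]) -> list[Endpoint]:
    """Highest risk first; ties broken by path so the order is stable."""
    return sorted(endpoints, key=lambda e: (-e.risk(), e.path))


targets = rank([
    Endpoint("/health", "other", likelihood=1),
    Endpoint("/checkout", "payments", likelihood=3, changed_recently=True),
    Endpoint("/login", "auth", likelihood=2),
    Endpoint("/products", "hot_read", likelihood=2),
])
for e in targets:
    print(e.risk(), e.path)
```

With these made-up inputs, `/checkout` scores 20 and lands first, while `/health` scores 1 and lands last. That ordering is the whole point of the health-check example below.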

A health check endpoint at 100% coverage while your payment flow has zero tests is not a tested application. It's a tested health check.

The Pyramid vs. Trophy Thing

I'm not going to relitigate this. Mike Cohn said pyramid. Kent C. Dodds said trophy. Martin Fowler said in 2021 that both camps are arguing about words they haven't defined. He's right.

What Dodds calls an "integration test" is what Fowler calls a "sociable unit test." The shape of your test suite depends on your architecture, not on a diagram someone drew in 2009.

Microservices? Integration tests at service boundaries give you the most confidence. Complex domain logic? Unit test the pure business rules. Frontend SPA? Component integration tests. Monolith with a database? Sociable unit tests hitting a real DB.

Stop debating shapes. Test the behavior your users depend on.

Don't Mock Your Database

I shouldn't have to explain this.

Teams mock their database in integration tests. Every test passes. Then production breaks, because the real database enforces constraints the mock doesn't know about. I've seen this kill weekends. I've debugged this at 2am.

In-memory databases are nearly as bad. Your SQLite test won't catch that your Postgres JSON query is malformed, that your migration breaks a constraint, or that your transaction isolation causes a deadlock.
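You can see the gap with nothing more than Python's built-in `sqlite3` module. SQLite does not enforce `VARCHAR` lengths. Outside of STRICT tables (SQLite 3.37+), it will also store text in an `INTEGER` column. PostgreSQL rejects both inserts below, so a test running against SQLite passes on data your production database would refuse. The `invoices` table is a hypothetical example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE invoices ("
    " id INTEGER PRIMARY KEY,"
    " currency VARCHAR(3) NOT NULL,"
    " amount_cents INTEGER NOT NULL)"
)

# Both inserts succeed in SQLite. PostgreSQL would reject each one:
# the first violates VARCHAR(3), the second is not a valid integer.
conn.execute("INSERT INTO invoices (currency, amount_cents) VALUES (?, ?)", ("DOLLARS", 1000))
conn.execute("INSERT INTO invoices (currency, amount_cents) VALUES (?, ?)", ("USD", "ten dollars"))

rows = conn.execute(
    "SELECT currency, amount_cents, typeof(amount_cents) FROM invoices ORDER BY id"
).fetchall()
print(rows)  # [('DOLLARS', 1000, 'integer'), ('USD', 'ten dollars', 'text')]
```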

The answer is Testcontainers. Real databases, real message queues, real Redis, running in Docker during your test suite. The setup cost is a couple of hours. The payoff is catching the entire class of bugs that mocks structurally cannot catch.
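Here is a minimal pytest sketch using testcontainers-python. It assumes the `testcontainers[postgres]`, `sqlalchemy` and `psycopg2-binary` packages are installed and a Docker daemon is running. `PostgresContainer` and `get_connection_url()` come from the library's PostgreSQL module. The `users` table and the test itself are hypothetical. The interesting part is the last block: a mock would accept the duplicate email, but the real database raises.

```python
# Assumes: pip install pytest "testcontainers[postgres]" sqlalchemy psycopg2-binary,
# plus a running Docker daemon. The users table and the test are hypothetical.
import pytest


@pytest.fixture(scope="session")
def pg_engine():
    # Skip (rather than crash) if the optional libraries are not installed.
    postgres = pytest.importorskip("testcontainers.postgres")
    sqlalchemy = pytest.importorskip("sqlalchemy")
    # One real PostgreSQL container shared by the whole test session.
    with postgres.PostgresContainer("postgres:16") as pg:
        engine = sqlalchemy.create_engine(pg.get_connection_url())
        yield engine
        engine.dispose()


def test_duplicate_email_is_rejected(pg_engine):
    sqlalchemy = pytest.importorskip("sqlalchemy")
    exc = pytest.importorskip("sqlalchemy.exc")
    text = sqlalchemy.text
    with pg_engine.begin() as conn:
        conn.execute(text("CREATE TABLE IF NOT EXISTS users (email TEXT UNIQUE NOT NULL)"))
        conn.execute(text("TRUNCATE users"))
        conn.execute(text("INSERT INTO users (email) VALUES ('a@example.com')"))

    # A mocked repository would accept this insert; the real UNIQUE constraint does not.
    with pytest.raises(exc.IntegrityError):
        with pg_engine.begin() as conn:
            conn.execute(text("INSERT INTO users (email) VALUES ('a@example.com')"))
```

The session-scoped fixture starts the container once, which keeps the Docker startup cost out of every individual test.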

My test-writer agent follows a simple rule: mock what you don't own (Stripe, SendGrid — use their test modes when available), never mock your own database, never mock the thing you're testing. That's it. Three rules.
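Here are the three rules sketched with `unittest.mock`. `PaymentService`, `charge_order` and the gateway's `create_charge` method are hypothetical stand-ins, not Stripe's SDK. The only thing faked is the third-party gateway at the edge, and the code under test runs for real. Where the provider offers a test mode, you could point the gateway at that instead of a `Mock`.

```python
from unittest import mock


class PaymentService:
    """Code we own and are testing: never mocked."""

    def __init__(self, gateway):
        self.gateway = gateway  # third-party client we don't own: fair game to fake

    def charge_order(self, items: list[tuple[int, int]], customer_id: str) -> dict:
        """items are (unit_price_cents, quantity) pairs."""
        amount = sum(price * qty for price, qty in items)
        if amount <= 0:
            raise ValueError("nothing to charge")
        return self.gateway.create_charge(customer=customer_id, amount=amount, currency="usd")


# Fake only the boundary we don't own; the return value mimics a gateway response.
gateway = mock.Mock()
gateway.create_charge.return_value = {"status": "succeeded"}

service = PaymentService(gateway)
result = service.charge_order([(1999, 2), (500, 1)], customer_id="cus_123")

# At an external boundary, the outgoing request IS the observable behavior,
# so checking the amount we computed is a behavior assertion, not an implementation one.
gateway.create_charge.assert_called_once_with(customer="cus_123", amount=4498, currency="usd")
print(result["status"])
```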

The 6 Mistakes That Actually Cost You

After building the pipeline and watching it chew through dozens of codebases, these are the patterns that keep showing up:

Testing implementation instead of behavior. You assert that an internal method was called with specific arguments. You refactor. Every test breaks. Nothing is actually broken. Your team stops trusting tests. Fix: test inputs and outputs. If a refactor that doesn't change behavior breaks your tests, they're coupled to structure, not correctness.
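Here is a small Python sketch of the difference using `unittest.mock`. `Cart` and `RefactoredCart` are hypothetical. The refactor only inlines a private helper, so behavior is identical. Even so, the implementation-coupled test fails while the behavior test keeps passing.

```python
from unittest import mock


class Cart:
    def __init__(self, prices):
        self.prices = prices

    def _subtotal(self):
        return sum(self.prices)

    def total(self, tax_rate):
        return round(self._subtotal() * (1 + tax_rate), 2)


class RefactoredCart:
    """Same behavior as Cart, with the private helper inlined."""

    def __init__(self, prices):
        self.prices = prices

    def total(self, tax_rate):
        return round(sum(self.prices) * (1 + tax_rate), 2)


def implementation_test(cart_cls):
    # Coupled to structure: asserts that a private helper was called.
    with mock.patch.object(cart_cls, "_subtotal", return_value=15.0) as subtotal:
        cart_cls([10.0, 5.0]).total(0.1)
        subtotal.assert_called_once_with()


def behavior_test(cart_cls):
    # Coupled to behavior: inputs in, output checked.
    assert cart_cls([10.0, 5.0]).total(0.1) == 16.5


def passes(test, cart_cls) -> bool:
    try:
        test(cart_cls)
        return True
    except (AssertionError, AttributeError):  # patching a missing attribute raises AttributeError
        return False


for cls in (Cart, RefactoredCart):
    print(cls.__name__, passes(implementation_test, cls), passes(behavior_test, cls))
```

It prints `Cart True True` and then `RefactoredCart False True`. The refactor changed nothing users can observe, yet one of the two tests "caught a bug."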

Overmocking. You mock every dependency. Tests pass. Production fails. The mock didn't replicate the real failure mode. Fix: only mock what you don't own.

Chasing coverage numbers. You write tests that execute code without meaningful assertions. Coverage goes up. Bug rate stays the same. Management is happy. Users are not. Fix: mutation testing. Full stop.

Ignoring flaky tests. A test fails intermittently. The team re-runs CI until it passes. Now you have a test suite everyone ignores. This is worse than no tests — it's false confidence. Quarantine them immediately. Fix root causes within the week. Not next sprint. This week.

Sharing state across tests. Test A writes to the database. Test B reads it. Tests pass in order, fail in parallel. Welcome to debugging test infrastructure instead of product code. Each test sets up and tears down its own state. Period.
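Here is a minimal sketch of per-test isolation, where each test runs in a transaction that is always rolled back. It uses Python's built-in `sqlite3` only so the snippet is self-contained. In a real suite the connection would come from the real database container, not SQLite. The `users` table and both tests are hypothetical.

```python
import sqlite3
from contextlib import contextmanager

# Shared connection with the schema committed once
# (in a real suite: a connection to the Testcontainers database).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (email TEXT UNIQUE NOT NULL)")
conn.commit()


@contextmanager
def isolated(connection):
    """Each test runs in its own transaction, rolled back whatever happens."""
    try:
        yield connection
    finally:
        connection.rollback()


def test_signup_creates_user():
    with isolated(conn) as db:
        db.execute("INSERT INTO users (email) VALUES ('a@example.com')")
        assert db.execute("SELECT COUNT(*) FROM users").fetchone()[0] == 1


def test_starts_empty():
    with isolated(conn) as db:
        assert db.execute("SELECT COUNT(*) FROM users").fetchone()[0] == 0


# Any order, any number of repeats: no test sees another test's rows.
for order in (
    [test_signup_creates_user, test_starts_empty],
    [test_starts_empty, test_signup_creates_user, test_signup_creates_user],
):
    for test in order:
        test()
print("all orders pass")
```

This pattern gives order independence. Tests running in parallel would additionally need one connection (or one database) per worker, because a shared connection is a shared transaction.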

Integration tests for everything. Your CI takes 45 minutes. Nobody runs tests locally. PRs sit waiting. Developers push without testing. You've accidentally created a culture where tests are someone else's problem. Unit test pure logic. Integration test boundaries. E2E only the 2-3 most critical user flows.

The Stack in 2026

If you're starting fresh:

TypeScript/JS — Vitest for unit (10-20x faster than Jest, native TS/ESM, 14M weekly downloads and growing), Testcontainers + Vitest for integration, Playwright for E2E. There is no technical reason to choose Jest for new projects anymore.

Python — pytest for everything unit-side, testcontainers-python for integration, Playwright for E2E.

Go — stdlib testing + testify, testcontainers-go for integration.

Java — JUnit 5 + Mockito, Testcontainers.

.NET — xUnit + Moq, Testcontainers for .NET, Playwright.

The Pipeline

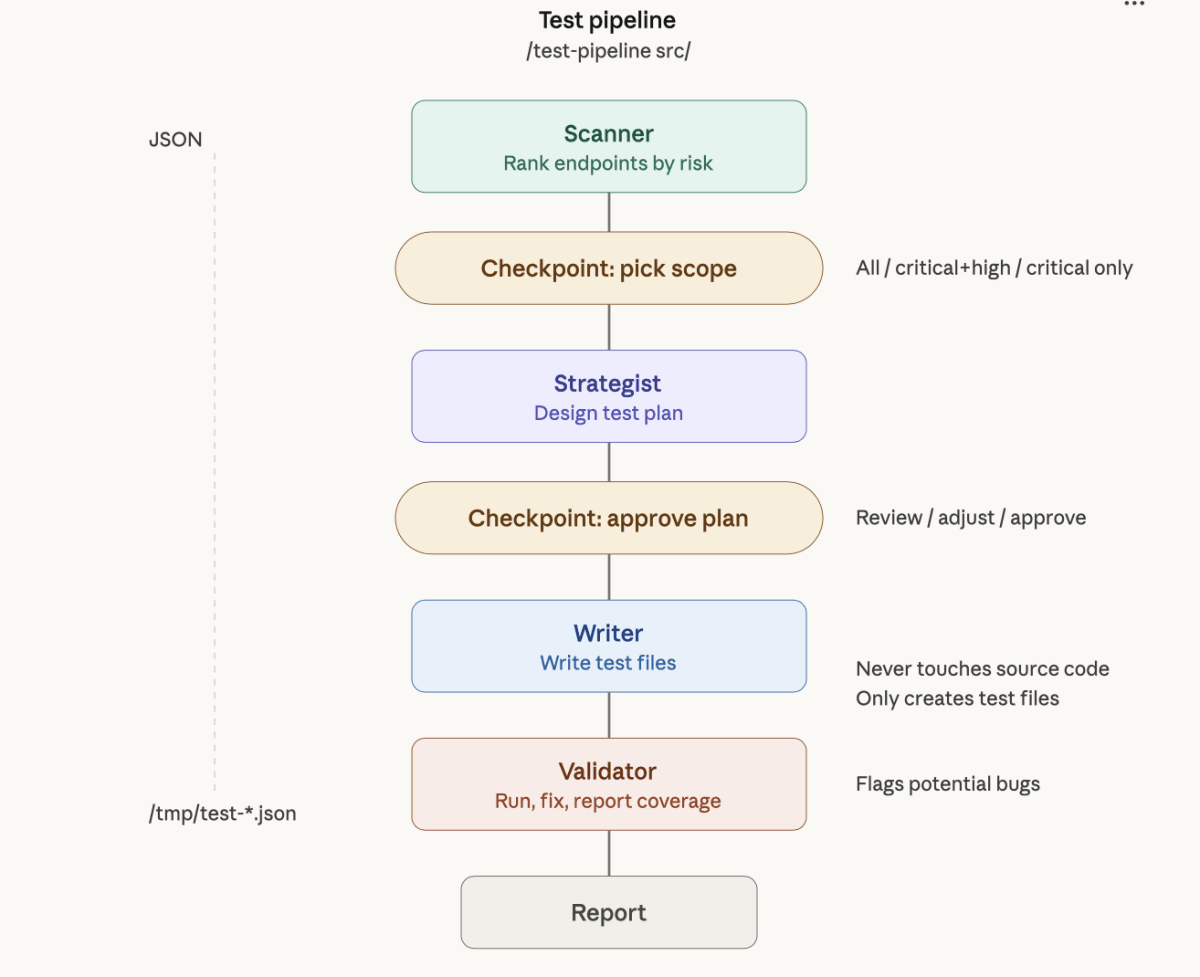

I built this because I got tired of having the same arguments about what to test and how. Four agents, one orchestrator:

Scanner — crawls the codebase, identifies every endpoint and handler, ranks them by risk. Business impact times failure likelihood. Spits out a prioritized target list.

Strategist — reads the scan report, designs the test plan. What type of test, what assertions, what to mock, what edge cases to cover. You get a checkpoint here to approve or adjust before any code gets written.

Writer — reads the strategy, writes actual test files. Auto-detects your framework, follows your project conventions. Knows the three rules about mocking.
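One plausible way "auto-detects your framework" could work is to look at project marker files, as in the sketch below. The marker file names are real conventions (`package.json`, `go.mod`, `pyproject.toml`). The `detect_framework` helper and its rules are hypothetical, and this is not the Writer agent's actual logic.

```python
import json


def detect_framework(files: dict[str, str]) -> str:
    """Hypothetical heuristic: map project marker files (name -> contents) to a test framework."""
    if "package.json" in files:
        manifest = json.loads(files["package.json"])
        deps = {**manifest.get("dependencies", {}), **manifest.get("devDependencies", {})}
        for name in ("vitest", "jest"):  # prefer Vitest if a project has both
            if name in deps:
                return name
        return "unknown-js"
    if "go.mod" in files:
        return "go test"
    if "pytest" in files.get("pyproject.toml", ""):
        return "pytest"
    return "unknown"


print(detect_framework({"package.json": '{"devDependencies": {"vitest": "^1.6.0"}}'}))  # vitest
print(detect_framework({"pyproject.toml": "[tool.pytest.ini_options]\n"}))  # pytest
```

Taking file contents as a plain dict keeps the rules testable without touching the filesystem. The agent would read the files and pass them in.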

Validator — runs the tests, fixes import and mock errors, flags potential source code bugs it discovered, reports coverage.

The whole thing communicates via JSON files, never touches source code, and only creates test files. Two human checkpoints: one after the scan to pick scope, one after the strategy to approve the plan.
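Here is a sketch of what a JSON handoff between two agents might look like, including the first human checkpoint. The file name, the field names and the `approved` flag are all hypothetical. The source only says the agents communicate through JSON files and pause twice for a human.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical scan report written by the Scanner and read by the Strategist.
scan_report = {
    "targets": [
        {"endpoint": "POST /checkout", "risk": 20, "selected": True},
        {"endpoint": "POST /login", "risk": 10, "selected": True},
        {"endpoint": "GET /health", "risk": 1, "selected": False},
    ],
    "approved": False,  # flipped by a human at the first checkpoint
}


def write_report(path: Path, report: dict) -> None:
    path.write_text(json.dumps(report, indent=2))


def load_scope(path: Path) -> list[str]:
    """Strategist side: refuse to plan until a human has approved the scope."""
    report = json.loads(path.read_text())
    if not report.get("approved"):
        raise RuntimeError("scan report not approved yet")
    return [t["endpoint"] for t in report["targets"] if t["selected"]]


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "scan-report.json"
    write_report(path, scan_report)
    scan_report["approved"] = True  # simulate the human checkpoint
    write_report(path, scan_report)
    scope = load_scope(path)

print(scope)  # ['POST /checkout', 'POST /login']
```

Files as the only interface make each agent's input and output inspectable, and they make the checkpoint a plain edit to a file rather than a special API.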

Run `/test-pipeline src/` and walk away.

The goal was never more tests. It's the right tests, on the right code, catching real bugs. Everything in this article is baked into those four agents.

Ahmed essyad

the owner of this space

A nerd? Yeah, the typical kind—nah, not really.

